ABSTRACT

Load balancing is the process of redistributing the total load across the individual nodes of a collective system to improve response time and resource utilization. A web server is a computer process that serves content requested by users over HTTP, and load balancing keeps the nodes handling those requests from becoming overloaded.

Load balancing can also be defined as a method of distributing workload across multiple computers or servers over a network link to achieve optimal resource utilization, which maximizes throughput and minimizes overall response time. It reduces the total waiting time for resources and avoids overloading them. With this technique, traffic is divided among multiple servers so that data can be sent and received without delay. There are many load balancing algorithms, each offering different benefits, such as Random Allocation, Round Robin Allocation, and Weighted Round Robin Allocation. As the number of users increases, so does the number of requested jobs that the web servers must handle.

The crucial issue in a distributed web server system is dividing the workload dynamically. Workload means the total processing time required to execute all the tasks assigned to a machine. Load balancing is the process of shifting workload among processors to improve the performance of the system. Hence, load balancing is an important factor in optimal resource utilization, which increases system performance and minimizes resource consumption.

INTRODUCTION

Load balancing is a technique in which a group of servers joins the same service and does the same work. The goals of load balancing are to increase reliability, maintain stability and availability, improve throughput, optimize resource utilization, and provide fault tolerance. Load balancing helps make a network more efficient. Its main function is to divide the work that one server would need to process between two or more servers so that it is processed faster and more efficiently for the client. As the number of servers grows, the risk of failure also increases.

There are many options for implementing load balancing in software. HAProxy is one of the best open-source load balancers, a fast and reliable solution offering high availability, load balancing, and proxying for TCP and HTTP-based applications. HAProxy is known to run reliably on Linux, Solaris, FreeBSD, OpenBSD, and AIX.

METHODOLOGY

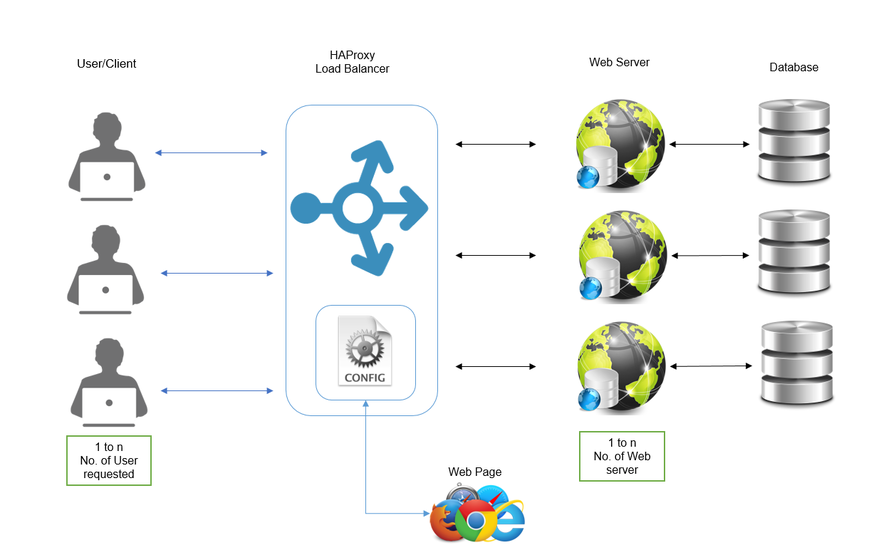

FRAMEWORK

- The user requests a job.

- The HAProxy load balancer determines where the job will be sent, based on the configured technique.

- The web server provides the requested resources to the user.

- The database is the collection of data stored by the application.

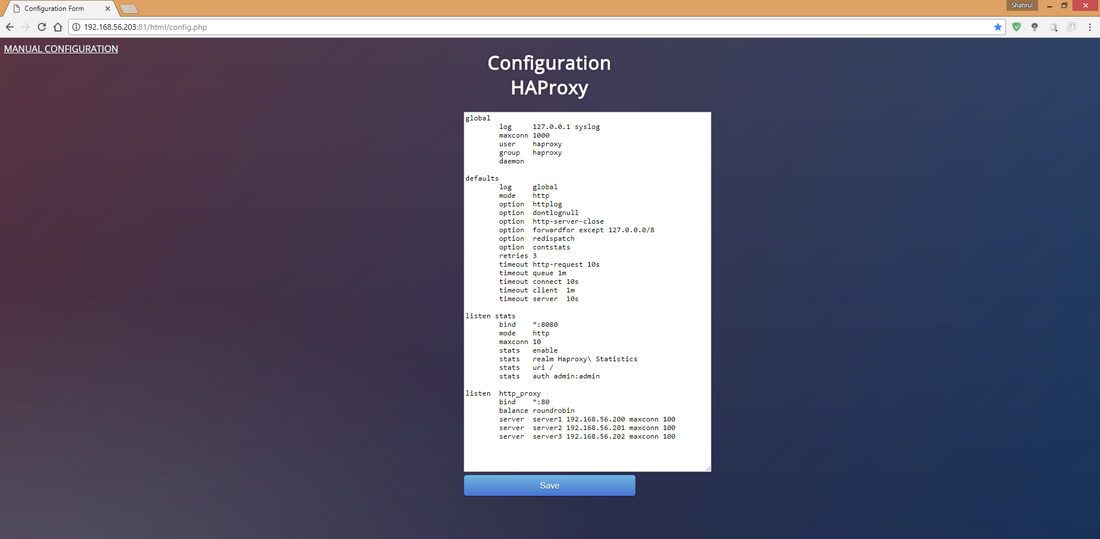

- The HAProxy load balancing configuration can be opened in a web page.

- We can configure and update HAProxy load balancing as needed through web pages instead of configuring it directly in Linux.

ALGORITHM

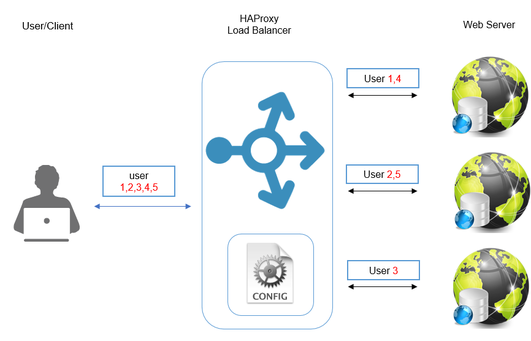

ROUND ROBIN

The algorithm chooses servers sequentially from the list. Once it reaches the end of the list, it forwards the next request to the first server in the list.

- Suppose, as in the figure, there are 5 user requests and 3 web servers to share the workload.

- User 1 goes to server 1; each subsequent request goes to the next server in sequence.

- Distribution continues with user 2 going to server 2 and user 3 to server 3; users 4 and 5 then wrap around to servers 1 and 2.
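
The five-user, three-server walk-through above can be sketched in a few lines of Python. This is only an illustration of the round robin idea, not HAProxy's own implementation; the function name `assign_round_robin` and the user and server labels are hypothetical, chosen to mirror the figure.

```python
from itertools import cycle


def assign_round_robin(requests, servers):
    """Pair each request with a server, cycling through the server list in order."""
    if not servers:
        raise ValueError("at least one server is required")
    rotation = cycle(servers)  # wraps back to the first server after the last one
    return [(request, next(rotation)) for request in requests]


# Hypothetical labels matching the scenario above: 5 users, 3 web servers.
users = [f"user {i}" for i in range(1, 6)]
servers = ["server 1", "server 2", "server 3"]

assignments = assign_round_robin(users, servers)
for user, server in assignments:
    print(f"{user} -> {server}")
```

Users 1 to 3 land on servers 1 to 3, and users 4 and 5 wrap around to servers 1 and 2. The sketch ignores server capacity and health, which is why weighted variants of the algorithm exist.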

CONCLUSION
